By BRIAN JOONDEPH

Artificial intelligence is rapidly becoming a core part of healthcare operations. It drafts clinical notes, summarizes patient visits, flags abnormal labs, triages messages, reviews imaging, helps with prior authorizations, and increasingly guides decision support. AI is no longer just a side experiment in medicine; it’s becoming a key interpreter of clinical reality.

That raises an important question for physicians, administrators, and policymakers alike: Is AI accurately reflecting the real world? Or subtly reshaping it?

The data is straightforward. According to the U.S. Census Bureau’s July 2023 estimates, about 75 percent of Americans identify as White (including Hispanic and non-Hispanic), around 14 percent as Black or African American, roughly 6 percent as Asian, and smaller percentages as Native American, Pacific Islander, or multiracial. Hispanic or Latino individuals, who can be of any race, make up roughly 19 percent of the population.

In short, the facts are measurable, verifiable, and accessible to the public.

I recently conducted a simple experiment with implications well beyond image creation. I asked two leading AI image-generation platforms to produce a group photo that reflects the racial composition of the U.S. population based on official Census data.
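To make the benchmark concrete, here is a minimal Python sketch of what a proportionally accurate group photo would contain. It uses the rounded Census shares quoted above. The "Other or multiracial" bucket is an assumed catch-all for the remaining 5 percent, and Hispanic or Latino ethnicity is left out because it overlaps the race categories. The function names and the largest-remainder rounding rule are illustrative choices, not anything the image platforms use.

```python
from math import floor

# Rounded shares quoted in the article, in percent. "Other or multiracial"
# is an assumed catch-all for the remainder, not an official Census category.
CENSUS_SHARES = {
    "White": 75,
    "Black or African American": 14,
    "Asian": 6,
    "Other or multiracial": 5,
}


def expected_counts(shares, group_size):
    """Split group_size people across groups in proportion to shares.

    Uses largest-remainder rounding so the counts always sum to group_size.
    """
    total = sum(shares.values())
    quotas = {g: group_size * s / total for g, s in shares.items()}
    counts = {g: floor(q) for g, q in quotas.items()}
    leftover = group_size - sum(counts.values())
    # Hand the remaining places to the groups with the largest fractional parts.
    by_remainder = sorted(quotas, key=lambda g: quotas[g] - counts[g], reverse=True)
    for g in by_remainder[:leftover]:
        counts[g] += 1
    return counts


def share_gap(observed_counts, shares):
    """Percentage-point gap (observed minus reference) for each group."""
    n = sum(observed_counts.values())
    total = sum(shares.values())
    return {
        g: 100 * observed_counts.get(g, 0) / n - 100 * shares[g] / total
        for g in shares
    }


if __name__ == "__main__":
    print(expected_counts(CENSUS_SHARES, 20))
```

For a hypothetical 20-person photo, this allocation works out to 15, 3, 1, and 1 across the four buckets. `share_gap` can then express how far any generated image departs from those proportions, in percentage points.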

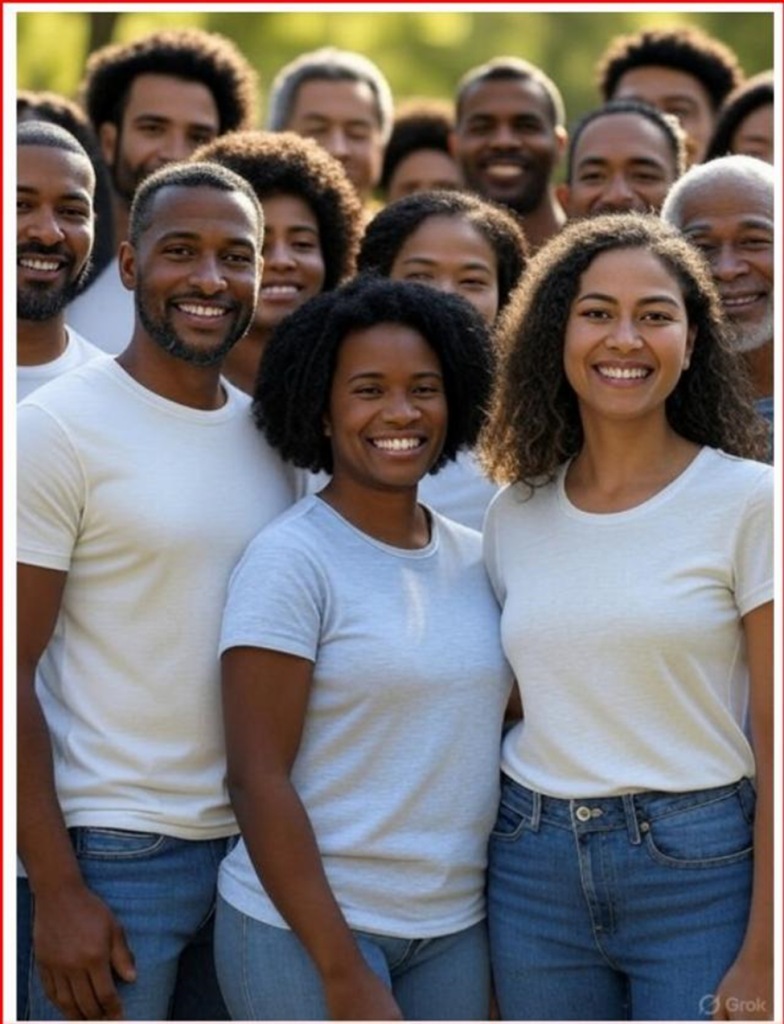

The first system I tested was Grok 3. When asked to generate a demographically accurate image based on Census data, the result showed only Black individuals, a complete deviation from reality.

After more prompts, later images showed more diversity, but White individuals were still consistently underrepresented compared to their share of the population.

When asked, the system acknowledged that image-generation models may prioritize diversity or aim to address historical underrepresentation in their outputs.

In other words, the model was not strictly mirroring data. It was modifying representation.

For comparison, I ran the same prompt through ChatGPT 5.0. The output more closely matched Census proportions but still needed adjustments, with the final image below. When asked, the system explained that image models may prioritize visual diversity unless given very specific demographic instructions.

This small experiment highlights a much bigger issue. When an AI system is explicitly instructed to reflect official demographic data but ends up producing an adjusted version of society, it’s not just a technical glitch. It reveals design choices: decisions about how models balance the goal of representation with the need for statistical accuracy.

That tension is particularly important in medicine.

Healthcare is currently engaged in active debate over the role of race in clinical algorithms. In recent years, professional societies and academic centers have reexamined race-adjusted eGFR calculations, pulmonary function test reference values, and obstetric risk scoring tools. Critics argue that using race as a biological proxy may reinforce inequities. Others warn that removing variables without considering underlying epidemiology could compromise predictive accuracy.

These debates are complex and nuanced, but they share a core principle: clinical tools must be transparent about which variables are included, why they’re chosen, and how they affect outcomes.

AI adds a new level of opacity.

Predictive models now support hospital readmission programs, sepsis alerts, imaging prioritization, and population health outreach. Large language models are being incorporated into electronic health records to summarize notes and recommend management plans. Machine learning systems are trained on massive datasets that inevitably reflect historical practice patterns, demographic distributions, and embedded biases.

The concern isn’t that AI will intentionally pursue ideological goals. AI systems lack consciousness, at least for now. However, they’re trained on datasets created by humans, filtered through algorithms developed by humans, and guided by guardrails set by humans. These upstream design choices affect the outputs that come later. Garbage in, garbage out.

If image-generation tools “rebalance” demographics to promote diversity, it’s reasonable to ask whether clinical AI tools might also adjust outputs to pursue other goals, such as equity metrics, institutional benchmarks, regulatory incentives, or financial constraints, even if unintentionally.

Consider predictive risk modeling. If an algorithm systematically adjusts output thresholds to avoid disparate impact statistics rather than accurately reflecting observed risk, clinicians might receive misleading signals. If a triage model is optimized to balance resource allocation metrics without proper clinical validation, patients could face unintended harm.
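The threshold concern can be illustrated with a small Python sketch. Everything in it is hypothetical: the cohorts "A" and "B", the risk values, and both flagging rules are invented for illustration and do not describe any deployed clinical system. The first rule flags patients whose estimated risk clears a single cutoff. The second flags the same fraction of each cohort regardless of the underlying risk level.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str   # hypothetical cohort label
    risk: float  # hypothetical model-estimated probability of the outcome


def flag_by_risk(patients, threshold):
    """Flag every patient whose estimated risk meets one shared threshold."""
    return [p.risk >= threshold for p in patients]


def flag_by_equal_rate(patients, rate):
    """Flag the highest-risk `rate` fraction within each group.

    This equalizes flag rates across groups, which implicitly gives each
    group its own risk cutoff.
    """
    flags = [False] * len(patients)
    by_group = {}
    for i, p in enumerate(patients):
        by_group.setdefault(p.group, []).append(i)
    for indices in by_group.values():
        k = round(rate * len(indices))
        ranked = sorted(indices, key=lambda i: patients[i].risk, reverse=True)
        for i in ranked[:k]:
            flags[i] = True
    return flags


# Invented example data: cohort A has higher estimated risk than cohort B.
PATIENTS = (
    [Patient("A", r) for r in (0.40, 0.35, 0.30, 0.10)]
    + [Patient("B", r) for r in (0.25, 0.15, 0.08, 0.05)]
)

if __name__ == "__main__":
    for p, single, equal in zip(
        PATIENTS,
        flag_by_risk(PATIENTS, 0.30),
        flag_by_equal_rate(PATIENTS, 0.5),
    ):
        print(p.group, p.risk, "single:", single, "equal-rate:", equal)
```

With these invented numbers, the equal-rate rule leaves the cohort A patient at 0.30 unflagged while flagging the cohort B patient at 0.15. The sketch does not say which rule is right for a given program. It only shows that the choice changes who gets flagged, which is why the adjustment should be disclosed and clinically validated.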

Accuracy in medicine is not cosmetic. It’s consequential.

Disease prevalence varies among populations because of genetic, environmental, behavioral, and socioeconomic factors. For instance, rates of hypertension, diabetes, glaucoma, sickle cell disease, and certain cancers differ significantly across demographic groups. These differences are epidemiological facts, not value judgments. Overlooking or smoothing them for the sake of representational symmetry could weaken clinical precision.

None of this argues against addressing healthcare inequities. On the contrary, identifying disparities requires accurate and thorough data. If AI tools blur distinctions in the name of fairness without transparency, they may paradoxically make disparities harder to identify and fix.

The solution is not to oppose AI integration into medicine. Its advantages are significant. In ophthalmology, AI-assisted retinal image analysis has shown high sensitivity and specificity in detecting diabetic retinopathy.

In radiology, machine learning tools can highlight subtle findings that might otherwise go unnoticed. Clinical documentation support can help reduce burnout by cutting clerical workload.

The promise is real. But so is the responsibility.

Health systems adopting AI tools should require transparency regarding model development, variable importance, and policies for output adjustments. Developers should disclose whether demographic balancing or representational modifications are built into training or inference processes.

Regulators should focus on explainability standards that enable clinicians to understand not only what an algorithm recommends, but also how it reached that recommendation.

Transparency isn’t optional in healthcare; it’s essential for clinical accuracy and building trust.

Patients trust that recommendations are based on evidence and clinical judgment. If AI acts as an intermediary between the clinician and patient by summarizing records, suggesting diagnoses, and stratifying risk, then its outputs must be as true to empirical reality as possible. Otherwise, medicine risks moving away from evidence-based practice toward narrative-driven analytics.

Artificial intelligence has remarkable potential to improve care delivery, expand access, and enhance diagnostic accuracy. However, its credibility depends on alignment with verifiable facts. When algorithms begin presenting the world not only as it is observed but as their creators believe it should be shown, trust declines.

Medicine can’t afford that erosion.

Data-driven care depends on data fidelity. If reality becomes malleable, so does trust. And in healthcare, trust isn’t a luxury. It’s the foundation on which everything else depends.

Brian C. Joondeph, MD, is a Colorado-based ophthalmologist and retina specialist. He writes frequently about artificial intelligence, medical ethics, and the future of physician practice on Dr. Brian’s Substack.